ChatGPT will not replace writers...unless we let it

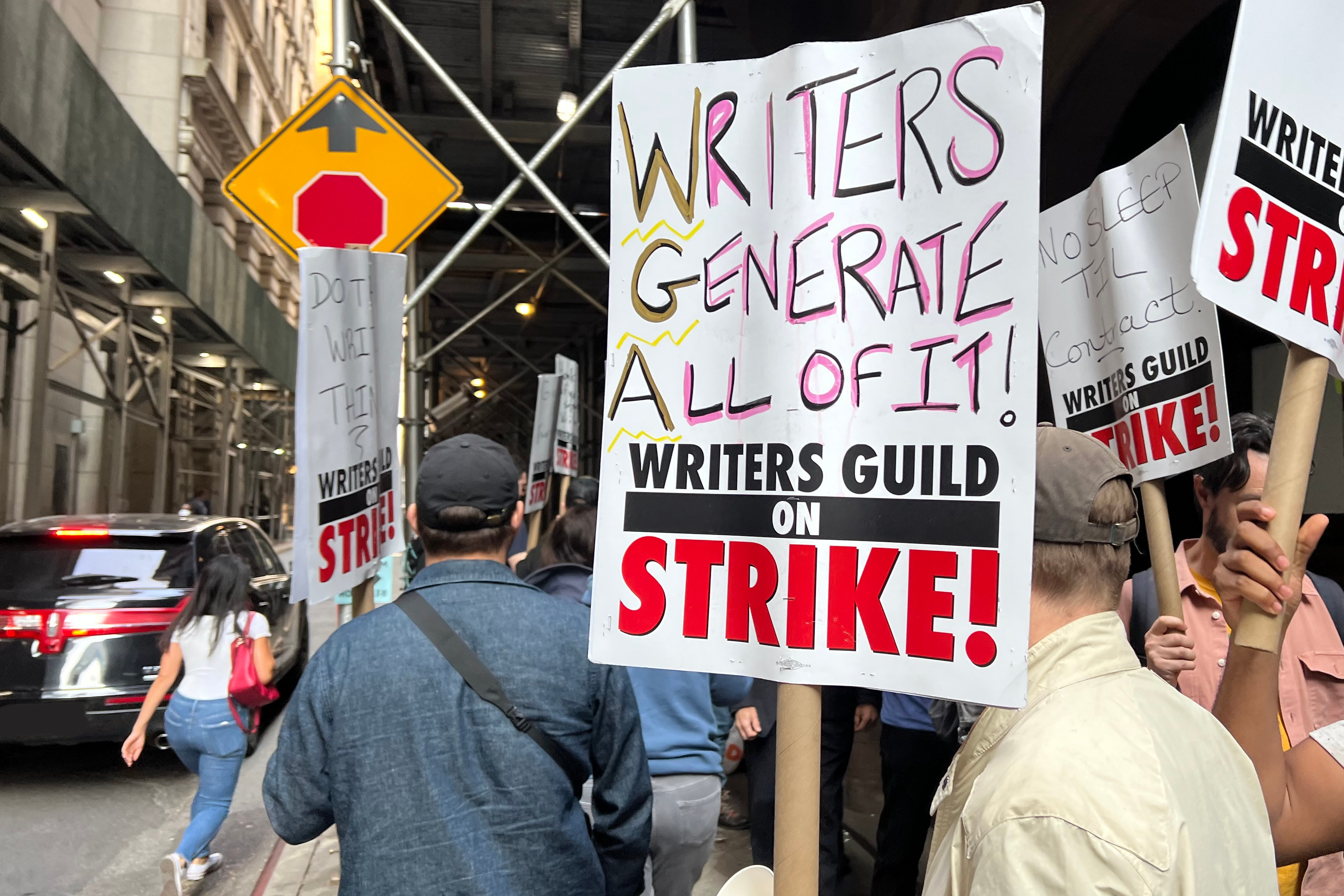

It looks like the Writers Guild (the union for people who write movies and TV shows) is striking again, this time because of ChatGPT.

Hi, I’m Lara. I wrote my dissertation on using AI to automatically generate stories, so I have some opinions about using AI to write stories. I also spoke to my wife, Cassie Kent—who does human-robot interaction—about this, and this blog post is an amalgamation of our thoughts.

So what do we think? In short: stop replacing writers with AI.

I’m not surprised this is happening and I’m 100% on the writers’ side. But I wanted to write this blog post to hash out some of the nuances of using ChatGPT (or other large language models; I’ll use “ChatGPT” as a shorthand) for creative tasks such as writing. (If you’d like a quick primer on what ChatGPT or a large language model is, check out my blog post from last year.)

But I digress.

Beyond the issues of equal pay and ethical treatment of writers, movie studios are missing the big picture. Making movies where AI alone writes the stories is not going to hold up long term. ChatGPT cannot replace writers because it’s not going to be able to tell the types of stories that people want to hear. That is, people have stories to tell and AI lacks the lived experiences to tell those stories. (If you take away anything from reading this post, this is it.)

But what if people only want movies/art for entertainment and not communication?

Even if you think of AI-created art as entertainment only and not as telling some personal story, it might be able to crank out a few okay stories, but people would soon realize they’re getting the same story over and over again, and things would get really boring.

You see, ChatGPT works off of likelihoods: at each step it picks the next word based on how probable that word is given the text so far. So it’s like it’s playing tug of war between being coherent and being unique. If you pick out really likely words, it’s going to spit out the most likely (i.e., boring) story possible. On the other hand, if you pick out really unlikely words, the story is going to be unique but unintelligible, or at least it will lose track of what it’s talking about over time. So it’s less like “haha that was so interesting and clever” and more like “haha the AI really has no idea what it’s talking about”.
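To make that tug of war a bit more concrete, here is a minimal sketch of one common knob for it, usually called “temperature”. Everything in it is made up for illustration: the four candidate words, their scores, and the function names are my own assumptions, not anything taken from ChatGPT itself. The idea is just that dividing the scores by a small temperature piles almost all of the probability onto the likeliest word, while a large temperature flattens things out so that odd words get picked more often.

```python
import math
import random

# Hypothetical scores for the word that follows "Once upon a ...".
# These numbers are invented for illustration, not taken from a real model.
TOY_LOGITS = {"time": 5.0, "midnight": 2.0, "hill": 1.0, "toaster": -1.0}


def apply_temperature(logits, temperature):
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    scaled = {word: score / temperature for word, score in logits.items()}
    biggest = max(scaled.values())  # subtract the max so exp() stays well-behaved
    exps = {word: math.exp(score - biggest) for word, score in scaled.items()}
    total = sum(exps.values())
    return {word: value / total for word, value in exps.items()}


def sample_word(logits, temperature, rng=random):
    """Draw one word at random according to the temperature-adjusted probabilities."""
    probs = apply_temperature(logits, temperature)
    words = list(probs)
    return rng.choices(words, weights=[probs[w] for w in words], k=1)[0]


if __name__ == "__main__":
    for temperature in (0.2, 1.0, 5.0):
        probs = apply_temperature(TOY_LOGITS, temperature)
        summary = ", ".join(f"{word}: {p:.2f}" for word, p in probs.items())
        print(f"temperature={temperature}: {summary}")
        print("  sampled:", [sample_word(TOY_LOGITS, temperature) for _ in range(5)])
```

At a low temperature the sketch picks “time” essentially every time (coherent but boring); at a high temperature “toaster” starts showing up (unique, but not necessarily sensible). Real systems have more going on than this, but the trade-off is the same one described above.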

Now, even though Hollywood thinks we want the same movies over and over again, if we actually got the same movies, people would stop paying for them. They would start to tune things out or get bored. And over time, directors and actors would refuse to work with those scripts.

Furthermore, culture changes over time, and ChatGPT already struggles with the intricacies of culture. It would not be able to reflect the diverse experiences that we have as humans. Things that we thought 20 or 30 years ago (or honestly even 3 years ago, e.g., COVID) are quickly becoming outdated. Humor, which AI already struggles with, changes very quickly and is extremely culture-specific.

Also, current entertainment already has a huge problem with representation. Older shows and movies have a much bigger problem. We can’t expect AI to fix this without human writers representing these perspectives and moving things forward.

So should we just stop using ChatGPT for writing scripts?

No, I don’t think so, and I’m not just saying that because I’m working on these tools.

I believe that AI and people collaborating is where we find our strengths. Computers are great at processing a large amount of data and humans are great at making connections between ideas. Also, what is AI for if not to either 1) help people or 2) give us a glimpse into how cognition works? (“People” here can also be animals.)

Plus, having these tools available means that people with less writing experience could write a decent script or that experienced writers could write scripts in a shorter period of time.

Some upcoming issues with using AI in writing

That said, because these tools are proprietary, there is a big concern about access. Will only high-end studios have access to these tools that can help their writers? Will studios continue to be conservative with their money and figure: “I’m already paying a person to write, I don’t want to pay for ChatGPT too”?

I don’t know enough about the writing process, but I can certainly see a future where AI will decrease the number of writers on a particular movie or show without eliminating them completely. This presents risks to covering the diversity of people’s experiences that we should be aware of and try to mitigate. (However, with fewer people on one team you might be able to have more projects going on at the same time, as long as other parts of the movie-making process also become cheaper.)

I don’t have the answers to these questions, and we don’t exactly know how things are going to turn out, but it’s good to be aware of what could happen so that we can be prepared.

Parting Thoughts

The people who make these technologies should take responsibility for the good and the bad that they put out into the world.

Even though automatically generated stories might not be as good as human-written stories, capitalism might not recognize this (but it should, because using only AI won’t be profitable long-term). That’s why writers’ strikes are important: they let us keep pushing back.

If you’d like to hear more about AI’s involvement in art, check out this blog post by my friend Kory Mathewson and this article by Louis Anslow.